Serverless Architecture: Cloud-Hosted Front End

By: Ben Stroud

Part 1 in a series, in which we cover the front end, the middle tier, and the back end.

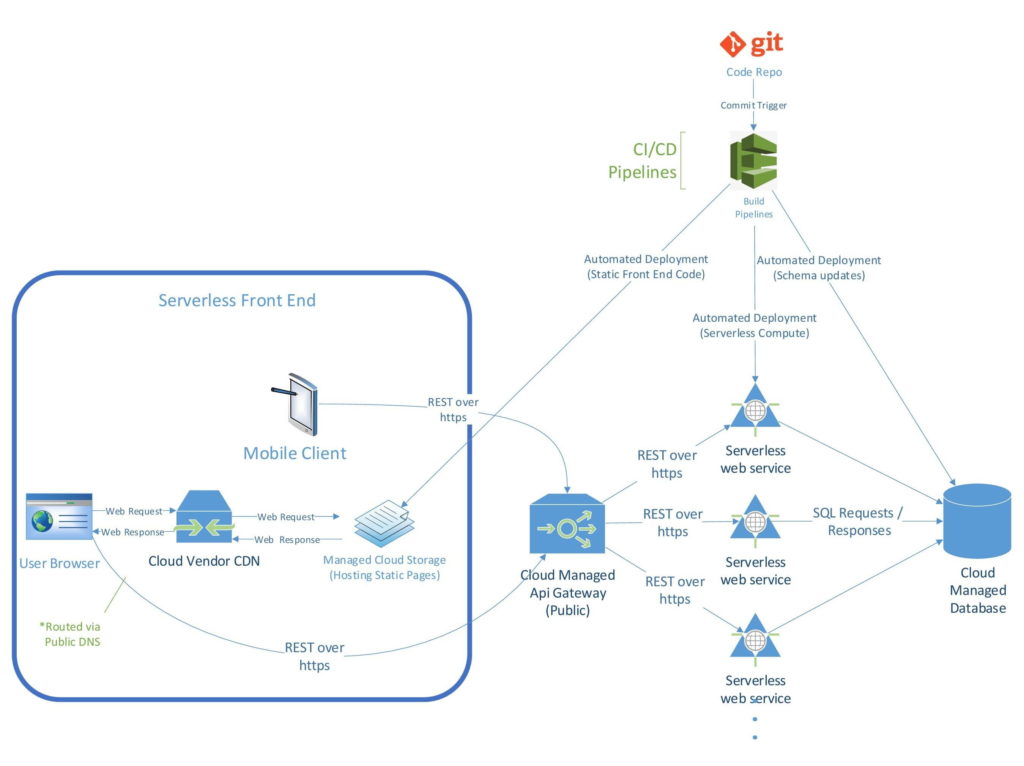

What does the front end of a serverless application look like? There’s much more than meets the eye when you are looking at the web page rendered by your browser. We’ll give you a comprehensive tour of what makes up the front end in a serverless architecture.

Let’s break this down to get a clear picture of what this means. Some of these items may be familiar, but others may not.

Static Site Code

Static site code is what is rendered in a web browser. The files delivered are exactly the same for every user who loads the web page. Static site code is the front end of your serverless, Cloud-hosted application. (Note: In the diagram above, you’ll find the static site code in the upper right, under the green CI/CD Pipelines text. We refer to it here as “Automated Deployment (Static Front End Code).”)

Static site code can be either built by hand or, more commonly, built with front-end libraries and tooling (such as React or jQuery, usually bundled by a Node.js-based build step) that produce the HTML, CSS (cascading style sheets), and JavaScript.

HTML, CSS, and JavaScript are the only types of code that can be hosted this way. If your websites are written in PHP, ASP.NET, or another server-side language, you must have them hosted on a web server (such as IIS) that understands those languages. The web server executes that code at run time to generate what the site needs from the specific business logic (such as tables and user authentication).

Web browsers are designed to render only HTML, CSS, and JavaScript. So the idea of a static front end is to build a site directly in HTML, CSS, and/or JavaScript. Why rely on a backend server if you don’t need to?

It’s important to note here that having static site code on the front end does not mean you can’t have an extremely dynamic website. In this sense, the term “static” is truly a misnomer. It simply means that the front end is not generated at request time by code running on a web server, whether compiled (such as a .NET application) or interpreted (such as a Perl script). The files reach the browser exactly as they were deployed. A static front end can have drop-downs, form fields, collapsing menus, logins, user controls, privileges, and any number of other interactive features.

Managed Cloud Storage

Static site code is deployed to Managed Cloud Storage. There are numerous companies that offer Cloud hosting, but these three dominate the market:

- Amazon Web Services (AWS) – offers cloud storage buckets in Amazon S3 (“S3”) that are similar to file folders on traditionally hosted sites.

- Microsoft Azure – offers the same type of hosting in Azure Blob Storage.

- Google Cloud Platform (GCP) – offers the same type of hosting in Google Cloud Storage buckets.
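
Whichever provider you choose, deploying comes down to copying each built file into the bucket under a key, with a Content-Type the browser will recognize. The sketch below only prepares that list of uploads; the function name `build_upload_manifest` and the manifest shape are assumptions made for illustration, and the actual upload would go through your provider's SDK or CLI, which is not shown.

```python
import mimetypes
from pathlib import Path


def build_upload_manifest(build_dir: str) -> list[dict]:
    """List every file under build_dir with the key and Content-Type to upload it with.

    Hypothetical helper: it prepares uploads but does not talk to any cloud provider.
    """
    root = Path(build_dir)
    manifest = []
    for path in sorted(root.rglob("*")):
        if not path.is_file():
            continue  # skip directories; buckets store objects, not folders
        # Object keys use forward slashes regardless of the local operating system.
        key = path.relative_to(root).as_posix()
        # Browsers rely on Content-Type to treat a file as HTML, CSS, or JavaScript.
        content_type, _ = mimetypes.guess_type(path.name)
        manifest.append({
            "key": key,
            "content_type": content_type or "application/octet-stream",
        })
    return manifest
```

Calling `build_upload_manifest` on your build output folder returns one entry per file, for example a key of `css/site.css` paired with `text/css`.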

Content Delivery Network (CDN)

A content delivery network (CDN) decreases the amount of time it takes to load a web page, no matter where in the world it is being loaded. All the major Cloud providers offer CDNs; the most recognized come from the top three Cloud providers (Amazon, Microsoft, and Google), plus Akamai.

If you have a site that needs to be global because you have users all over the world, then you need a CDN. The mechanics behind CDNs are simple. The CDN stores a cached version of your site in different geographical locations (known as points of presence, or PoPs) around the world. Every PoP has several caching servers that provide delivery of your site to web visitors within its area. Instead of pointing your domain to the S3 bucket, you point it to the CDN.

A CDN is a Cloud-provided service that is meant to be bulletproof. It scales automatically and can take millions of requests at once. Traffic spikes are handled easily, because all the traffic to your site is sent first to the CDN. All the S3 bucket has to do is serve files to the CDN, not to every visitor to your site.
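
To see why the bucket does so little work, here is a toy simulation of one PoP sitting in front of the storage bucket. The `Origin` and `EdgeCache` classes are invented for this sketch, and it ignores things a real CDN handles, such as cache expiry and invalidation.

```python
class Origin:
    """Stands in for the storage bucket; counts how often it is actually read."""

    def __init__(self, files: dict[str, str]) -> None:
        self.files = files
        self.requests = 0

    def get(self, path: str) -> str:
        self.requests += 1
        return self.files[path]


class EdgeCache:
    """Stands in for one CDN point of presence with a simple in-memory cache."""

    def __init__(self, origin: Origin) -> None:
        self.origin = origin
        self.cache: dict[str, str] = {}

    def get(self, path: str) -> str:
        if path not in self.cache:
            # Cache miss: fetch once from the origin and keep a copy at the edge.
            self.cache[path] = self.origin.get(path)
        return self.cache[path]


origin = Origin({"/index.html": "<h1>Hello</h1>"})
edge = EdgeCache(origin)
for _ in range(1000):
    edge.get("/index.html")
print(origin.requests)  # 1 origin read for 1,000 visitor requests
```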

This means that if you have a web visitor in London, they will pull your site from a PoP in London. If you have a web visitor in Mumbai, they will pull your site from a PoP in Mumbai. A CDN greatly reduces the latency that those same web visitors would experience if they had to pull your site from a traditionally hosted server in Des Moines.
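
The idea of serving each visitor from a nearby PoP can be sketched with a simple distance calculation. This is an illustration only: the PoP list and coordinates are approximate and hypothetical, and real CDNs typically route on network conditions rather than pure geographic distance.

```python
import math

EARTH_RADIUS_KM = 6371.0

# Approximate (latitude, longitude) pairs; the PoP list is hypothetical.
POPS = {"London": (51.51, -0.13), "Mumbai": (19.08, 72.88)}
ORIGIN = (41.59, -93.62)  # Des Moines, standing in for a single traditional server


def distance_km(a: tuple[float, float], b: tuple[float, float]) -> float:
    """Great-circle distance between two points, using the haversine formula."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def nearest_pop(visitor: tuple[float, float], pops: dict[str, tuple[float, float]]) -> str:
    """Return the name of the PoP geographically closest to the visitor."""
    return min(pops, key=lambda name: distance_km(visitor, pops[name]))


visitor = (18.52, 73.86)  # a visitor in Pune, India
pop = nearest_pop(visitor, POPS)
print(pop)  # Mumbai
print(distance_km(visitor, POPS[pop]) < distance_km(visitor, ORIGIN))  # True
```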

How the Front End Works With the Rest of the Serverless Architecture

The business logic for interactive web applications is hosted in a serverless compute environment (services such as AWS Lambda and Azure function apps that run code on demand).

Your static front end can’t execute server-side code, but JavaScript running in the browser can send requests to the business logic in the serverless compute environment, where the code is executed and the result is returned to the page as a response. This is the standard way a serverless application interacts with these endpoints. The front end works dynamically by interacting with the middle tier (which we will discuss in the next article in this series).
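
To make the request and response concrete, here is a minimal sketch of what a function on the other end of such a request might look like. It assumes an AWS Lambda-style handler behind an HTTP endpoint, where the request body arrives as a JSON string and the function returns a status code, headers, and a body. The greeting logic and the `name` field are hypothetical.

```python
import json

JSON_HEADERS = {"Content-Type": "application/json"}


def handler(event: dict, context: object) -> dict:
    """Hypothetical serverless function: greet the name sent by the front end."""
    try:
        payload = json.loads(event.get("body") or "{}")
    except json.JSONDecodeError:
        payload = None
    if not isinstance(payload, dict):
        # Reject anything that is not a JSON object.
        return {
            "statusCode": 400,
            "headers": JSON_HEADERS,
            "body": json.dumps({"error": "expected a JSON object"}),
        }
    name = payload.get("name", "world")
    return {
        "statusCode": 200,
        "headers": JSON_HEADERS,
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }


# Simulate the request the page would send, without any network involved.
response = handler({"body": json.dumps({"name": "Ada"})}, None)
print(response["body"])  # {"message": "Hello, Ada!"}
```

In the browser, the static page would send the same JSON body to the function's URL and use the response to update what is on screen.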

Advantages of a Serverless Front End

The primary advantage is that you don’t have to maintain a server. Of course, the cloud itself is technically a massive collection of “servers,” but not the traditional VM (virtual machine) server that requires maintenance, updates, scaling, and human oversight. Your Cloud vendor takes care of all this. All you have to do is put the files in the bucket and set permissions. Then you’re done.

Another advantage is performance. Performance is improved because all the work is decentralized and the browser renders the dynamic elements of the application. There is less work at run time and the work is on the client side instead of on a server. With traditional web applications, performance is often an issue because you can have a lot of people (millions!) hitting the server at the same time.

Speaking of performance, another advantage is the ability to use a CDN. A CDN further optimizes performance by distributing the load in your serverless environment to PoPs around the country (or the world), so users load your web application from a location near them.

Is Serverless Architecture for Everyone?

The total cost of ownership of a well-designed serverless application should be significantly lower than that of a traditional server-based application, for two key reasons. First, there is no server to maintain, and server maintenance costs money. Second, a serverless application is pay-as-you-go; you only pay for the “compute power” that your application actually uses. So it would seem that all applications should be built this way, right?
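
The pay-as-you-go reasoning can be worked through with a little arithmetic. The prices below are made-up placeholders chosen to keep the numbers round, not any vendor's actual rates, and the function names are invented for this sketch; substitute your own figures.

```python
def pay_as_you_go_cost(requests: int, price_per_million: float) -> float:
    """Monthly cost when you are billed only for the requests actually served."""
    return requests / 1_000_000 * price_per_million


def break_even_requests(fixed_monthly_cost: float, price_per_million: float) -> float:
    """Monthly request volume at which pay-as-you-go costs as much as a fixed server."""
    return fixed_monthly_cost / price_per_million * 1_000_000


# Placeholder figures for illustration only, not real pricing.
FIXED_SERVER_COST = 50.0   # hypothetical monthly cost of an always-on server
PRICE_PER_MILLION = 2.0    # hypothetical charge per million requests

print(pay_as_you_go_cost(1_000_000, PRICE_PER_MILLION))           # 2.0
print(break_even_requests(FIXED_SERVER_COST, PRICE_PER_MILLION))  # 25000000.0
```

Under these placeholder numbers, the always-on server only becomes the cheaper option above 25 million requests a month, and that is before counting the cost of maintaining it.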

It is important to remember that a serverless architecture requires an entirely new skillset (besides programming languages, you also need to understand cloud storage solutions, native cloud development, and CDNs). If your team doesn’t have these skills yet, learning them will certainly carry a cost. So it doesn’t necessarily make sense to rush out and convert all of your traditional client-server applications to be serverless.

Further, if you are building new applications that are relatively small or do not have to serve a large or geographically diverse user base, then the ramp-up time to learn serverless-architecture skills may not be warranted. And finally, some applications simply are not well suited for a serverless architecture, particularly those with high-intensity functions that will operate more efficiently on a dedicated server (or VM).

That said, serverless architectures are clearly the wave of the future. It is OK if you aren’t there yet, but at a minimum you should be educating yourself and figuring out how you can become serverless sooner rather than later.

© 2026 Winmill Software. All Rights Reserved.